Most CPA firms think of quality control as "the review." A senior accountant or partner looks at the work, checks it, and signs off. That works when all the work is done in-house by people you have trained for years. It does not work when you add an offshore team to the mix, at least not by itself.

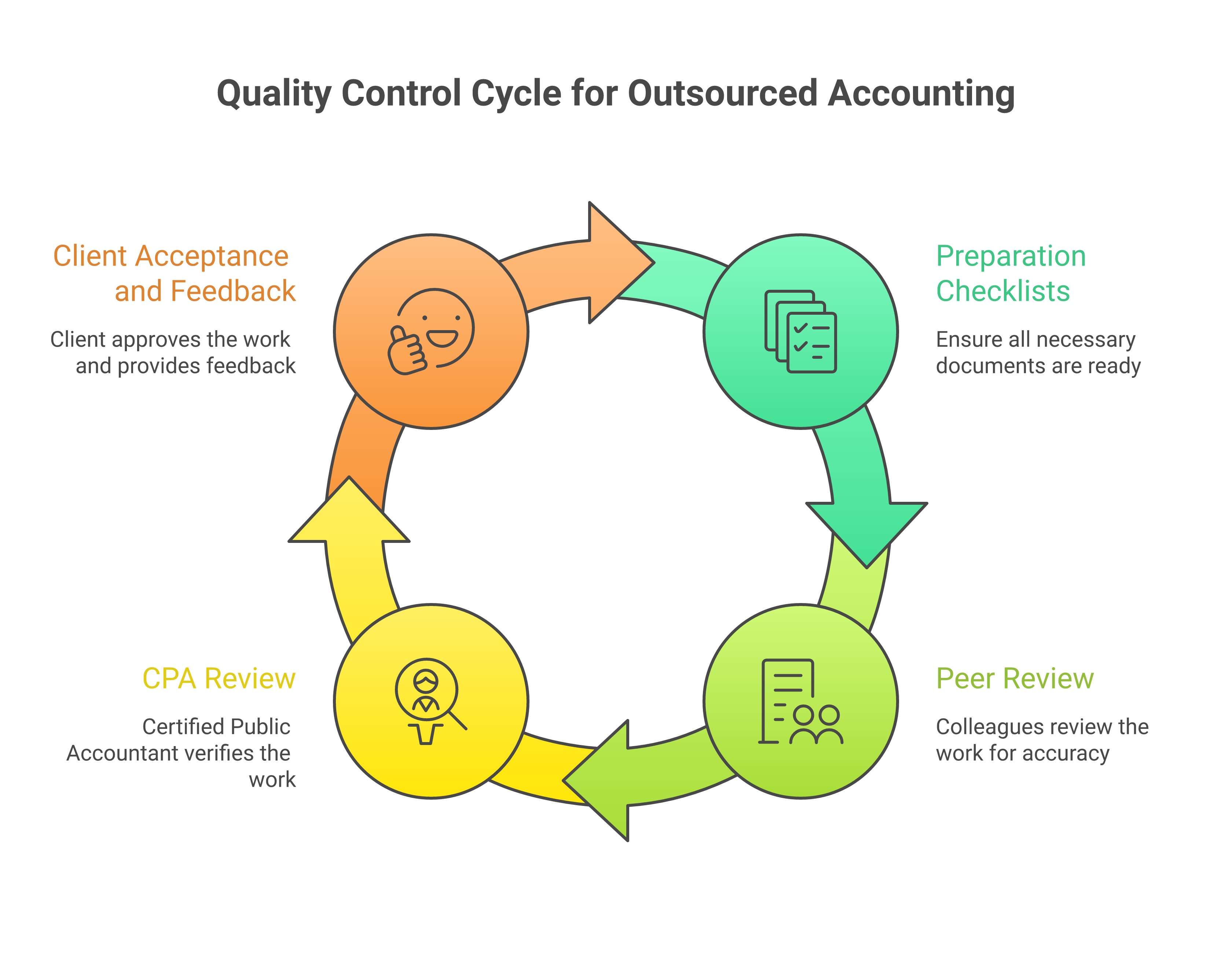

When you outsource accounting work, you need a QC system with multiple layers. Each layer catches different types of errors at different stages. The goal is not to catch everything at the final review. The goal is to catch most issues before they reach the final reviewer, so the CPA's time is spent on judgment calls and client-facing decisions, not hunting for data entry mistakes.

We have built and refined this QC system across hundreds of CPA firm engagements at Madras Accountancy. Our quality control guide for outsourced accounting covers the philosophy. This article is the practical setup guide, covering exactly what to build and how.

The first quality control layer happens before any review. It is built into the preparation process itself.

Every work type gets its own preparation checklist. A monthly bookkeeping close checklist is different from a tax return preparation checklist, which is different from a payroll processing checklist. Generic checklists that try to cover everything end up covering nothing well.

A good preparation checklist has three characteristics. It is specific enough that the preparer can verify each item without judgment calls. It is short enough that it actually gets completed (20 to 30 items maximum). And it is version-controlled so updates are tracked and every preparer is using the current version.

Here is what a monthly bookkeeping close checklist looks like in practice:

- Bank reconciliations completed for all accounts, with zero unreconciled items over 30 days.
- Credit card reconciliations completed and matched to statements.
- Accounts receivable aging reviewed and items over 90 days flagged.
- Accounts payable reviewed for duplicate entries.
- Revenue recognized per the client's recognition method (cash or accrual).
- Payroll entries posted and reconciled to payroll reports.
- Depreciation entries posted per the fixed asset schedule.
- Prepaid expenses amortized for the current month.
- Accrued expenses reviewed and adjusted.
- Intercompany entries posted and balanced (if applicable).
- Trial balance reviewed for unusual balances (negative assets, positive contra accounts, suspense account balances).
- Prior month adjusting entries reversed if applicable.

Each item has a yes/no/NA response. The preparer completes the checklist as part of the work, not as an afterthought. The completed checklist is submitted with the deliverable. If any item is marked "no," there must be a note explaining why.
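If the checklist lives in a spreadsheet or a simple tool, the submission rule above is easy to enforce automatically. The following is a minimal Python sketch, not a description of any particular product; the `ChecklistItem` class and `checklist_problems` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

VALID_RESPONSES = {"yes", "no", "na"}


@dataclass
class ChecklistItem:
    description: str
    response: str = ""  # "yes", "no", or "na"; empty means unanswered
    note: str = ""      # required whenever the response is "no"


def checklist_problems(items):
    """Return the reasons a checklist is not ready to submit with the deliverable."""
    problems = []
    for item in items:
        response = item.response.strip().lower()
        if response not in VALID_RESPONSES:
            problems.append(f"Unanswered or invalid response: {item.description}")
        elif response == "no" and not item.note.strip():
            problems.append(f"'No' without an explanatory note: {item.description}")
    return problems


# Illustrative items taken from the monthly close checklist.
close_checklist = [
    ChecklistItem("Bank reconciliations completed for all accounts", "yes"),
    ChecklistItem("Accounts payable reviewed for duplicate entries", "no"),
    ChecklistItem("Intercompany entries posted and balanced", "na"),
]

problems = checklist_problems(close_checklist)
for problem in problems:
    print(problem)
```

Here the second item is flagged because it is marked "no" with no note. Once the preparer adds an explanation, the checklist passes and can be submitted with the deliverable.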

This layer catches 40 to 50 percent of common errors before any review happens: missed reconciliations, forgotten entries, skipped steps. The checklist forces completeness.

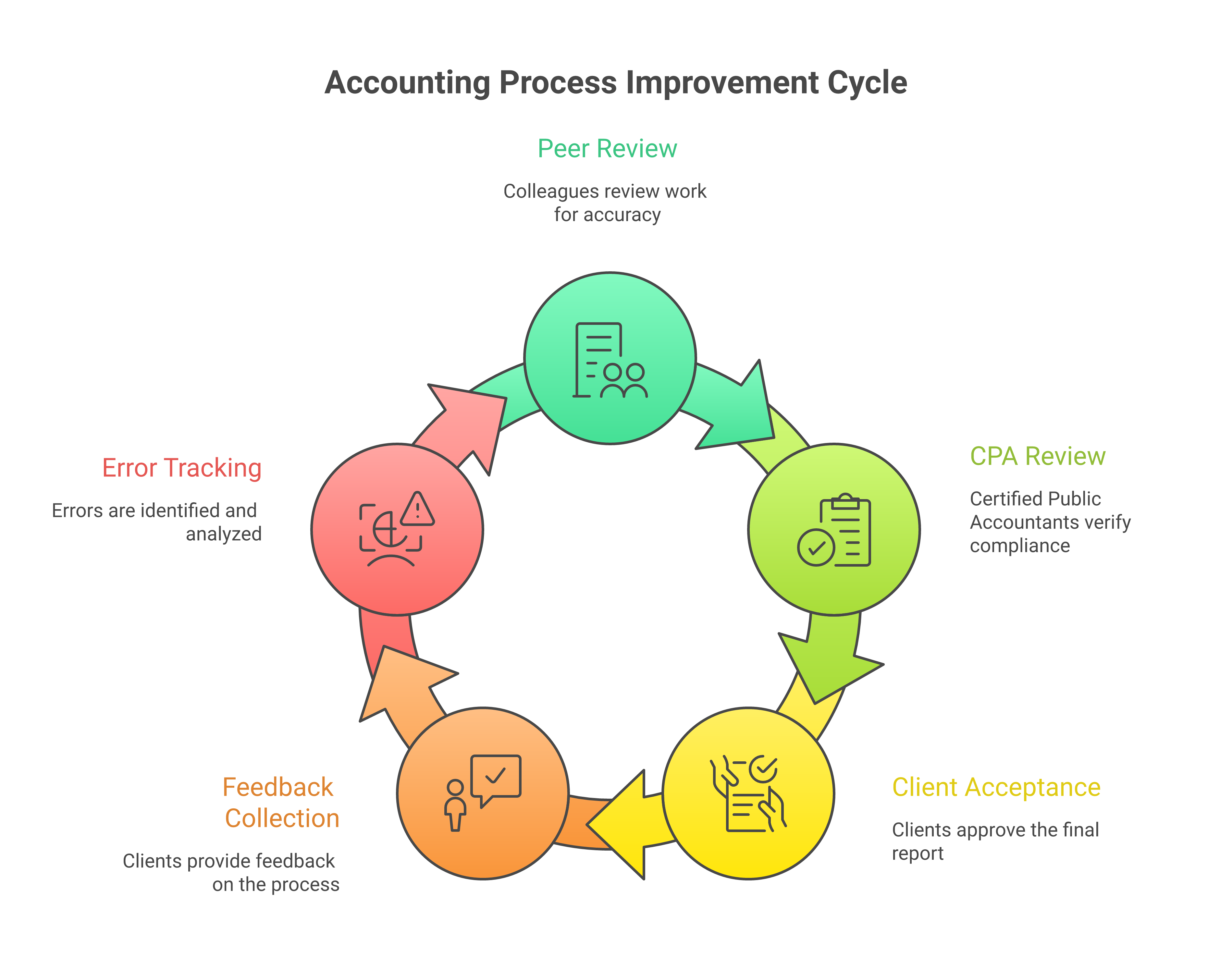

The second layer is a peer review by another member of the offshore team. This is not a full re-preparation. It is a targeted review by a second set of eyes, focused on the areas most likely to contain errors.

The peer reviewer checks several things. Do the numbers tie? Does the trial balance balance? Do subledger totals match general ledger control accounts? Do bank reconciliation ending balances match the GL? Are there any obvious anomalies, such as revenue that is 50 percent higher than the prior month with no explanation, or an expense account with a credit balance? Is the preparation checklist complete and accurate?
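The tie-out questions are mechanical enough to script before the peer reviewer opens the file. The sketch below is one possible way to do it in Python, assuming balances have been exported as signed amounts (debits positive, credits negative) under the same account names everywhere. The function name, account names, and figures are all illustrative.

```python
from decimal import Decimal


def tie_out_issues(trial_balance, subledger_totals, bank_rec_balances):
    """Return a list of tie-out problems for the peer reviewer to follow up on.

    All balances are signed Decimals (debits positive, credits negative).
    """
    issues = []

    # Does the trial balance balance? Signed balances should net to zero.
    net = sum(trial_balance.values(), Decimal("0"))
    if net != 0:
        issues.append(f"Trial balance is out of balance by {net}")

    # Do subledger totals and bank rec ending balances match the GL?
    checks = [
        ("Subledger", subledger_totals),
        ("Bank reconciliation", bank_rec_balances),
    ]
    for label, expected in checks:
        for account, amount in expected.items():
            gl_balance = trial_balance.get(account, Decimal("0"))
            if gl_balance != amount:
                issues.append(
                    f"{label} for {account} is {amount}, but the GL shows {gl_balance}"
                )
    return issues


# Hypothetical month-end balances.
trial_balance = {
    "Cash": Decimal("12000.00"),
    "Accounts Receivable": Decimal("8000.00"),
    "Accounts Payable": Decimal("-5000.00"),
    "Equity": Decimal("-10000.00"),
    "Revenue": Decimal("-5000.00"),
}
issues = tie_out_issues(
    trial_balance,
    subledger_totals={
        "Accounts Receivable": Decimal("8000.00"),
        "Accounts Payable": Decimal("-5000.00"),
    },
    bank_rec_balances={"Cash": Decimal("11850.00")},
)
for issue in issues:
    print(issue)
```

In this example the trial balance nets to zero and both subledgers tie, so the only item reported is the cash account, where the reconciled balance and the GL differ. Anomaly checks, such as an unexplained jump in revenue, still need a human look.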

Peer review adds 15 to 20 minutes per engagement at the bookkeeping level and 30 to 45 minutes per return at the tax level. That is a small time investment for the errors it catches. In our tracking data, peer review catches an additional 15 to 20 percent of errors that the preparation checklist missed.

Not every firm uses peer review. For smaller engagements or simple work types, some firms go directly from preparation to CPA review. But for complex work (multi-entity bookkeeping, business tax returns, audit support), we strongly recommend it. The cost of a peer reviewer's time is a fraction of the cost of a CPA partner finding basic errors in what should be review-ready work.

The third layer, CPA review, is the one most firms already have. A licensed CPA or senior accountant reviews the completed work before it goes to the client. The difference in a well-designed QC system is that the CPA reviewer is not looking for data entry mistakes or missing reconciliations. Those should be caught in layers 1 and 2.

The CPA reviewer focuses on judgment areas. Is the accounting treatment correct for unusual transactions? Are tax positions supportable? Does the financial data tell a story that makes sense for this client's business? Are there any items that need client discussion before finalizing?

Structured review notes make this layer more effective. Instead of free-form comments, we use a standardized review note format that includes the item description, the specific issue, the severity (critical, moderate, minor), and the action needed (fix, discuss with client, or note for next period).

This structure serves two purposes. It gives the offshore preparer clear instructions on what to fix. And it creates data that feeds into the error tracking system (layer 5), which is how you measure quality over time.
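To make the format concrete, here is a small Python sketch of a review note with the four fields described above. The class and field names are hypothetical; the same structure works just as well as four columns in a spreadsheet.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"
    MODERATE = "moderate"
    MINOR = "minor"


class Action(Enum):
    FIX = "fix"
    DISCUSS_WITH_CLIENT = "discuss with client"
    NOTE_FOR_NEXT_PERIOD = "note for next period"


@dataclass(frozen=True)
class ReviewNote:
    item: str           # what the note is about
    issue: str          # the specific problem found
    severity: Severity  # critical, moderate, or minor
    action: Action      # what the preparer should do next

    def summary(self):
        """One-line version suitable for a tracking log or a message to the preparer."""
        return (
            f"[{self.severity.value.upper()}] {self.item}: "
            f"{self.issue} (action: {self.action.value})"
        )


# Illustrative note, not taken from a real engagement.
note = ReviewNote(
    item="Prepaid insurance",
    issue="Amortization not posted for the current month",
    severity=Severity.MODERATE,
    action=Action.FIX,
)
print(note.summary())
```

Restricting severity and action to fixed values is the design choice that matters here. Free-text severities cannot be counted or trended later.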

The reviewer's time per engagement should decrease as the relationship matures. In the first month of a new engagement, a CPA might spend 2 hours reviewing a monthly bookkeeping package. By month six, that should be down to 30 to 45 minutes because the preparer has learned the client's nuances and layers 1 and 2 are catching the routine issues.

The fourth layer, client feedback, is often overlooked, but it matters. After the CPA reviews and approves the work, the client receives it. Client feedback, both direct and indirect, is a quality signal.

Direct feedback is when the client calls and says "this number looks wrong" or "you missed a transaction." Indirect feedback is when the client stops asking questions, which usually means the work is consistently meeting expectations.

We track client feedback as part of our QC system. Every client inquiry that results in a correction is logged. These corrections tell you something that the internal review process missed. Maybe the reconciliation looked right but the client knows about a deposit that should have been recorded differently. Maybe the expense categorization was technically correct but does not match how the client thinks about their business.

Client feedback corrections should be rare (fewer than 2 per engagement per month in steady state) but when they happen, they get folded back into the preparation process. If a client repeatedly flags the same type of issue, that becomes a checklist item for that specific engagement.

Layers 1 through 4 catch individual errors. Layer 5 is about patterns. It is the system that turns individual error data into quality improvement.

Every error found at any layer gets logged in a tracking system with the following attributes. The engagement and client name. The error type (data entry, missed transaction, wrong account coding, wrong period, calculation error, omitted step, tax position error). The severity level. The layer where it was caught (preparation checklist, peer review, CPA review, client feedback). The preparer who made the error. The date.

With this data, you can answer questions that drive real improvement. Which error types are most common? Are certain preparers making the same mistakes repeatedly? Are errors concentrated in certain engagement types? Is quality improving over time or degrading? Are errors being caught earlier (layers 1 and 2) or later (layers 3 and 4)?
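As a rough illustration of how the log answers these questions, the Python sketch below models one error record with the attributes listed earlier and computes three summaries from a list of records. The field names, the `summarize` function, and the sample entries are assumptions made for the example.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ErrorRecord:
    engagement: str
    error_type: str
    severity: str
    layer_caught: int  # 1 checklist, 2 peer review, 3 CPA review, 4 client feedback
    preparer: str
    logged_on: date


def summarize(records):
    """Answer three of the questions above from a list of ErrorRecord entries."""
    by_type = Counter(r.error_type for r in records)
    by_preparer_and_type = Counter((r.preparer, r.error_type) for r in records)
    caught_early = sum(1 for r in records if r.layer_caught <= 2)
    return {
        # Which error type is most common?
        "most_common_type": by_type.most_common(1)[0][0] if records else None,
        # Is a preparer repeating the same kind of mistake?
        "repeat_patterns": {k: n for k, n in by_preparer_and_type.items() if n > 1},
        # What share of errors is caught in layers 1 and 2?
        "share_caught_early": caught_early / len(records) if records else 0.0,
    }


# Hypothetical log entries.
records = [
    ErrorRecord("Client A close", "wrong account coding", "minor", 2, "Preparer 1", date(2024, 3, 4)),
    ErrorRecord("Client A close", "wrong account coding", "minor", 3, "Preparer 1", date(2024, 3, 5)),
    ErrorRecord("Client B return", "calculation error", "moderate", 1, "Preparer 2", date(2024, 3, 6)),
    ErrorRecord("Client B return", "wrong period", "critical", 3, "Preparer 2", date(2024, 3, 7)),
]
summary = summarize(records)
print(summary)
```

With these four entries, account coding is the most common error type, one preparer shows a repeat pattern, and half of the errors were caught before CPA review.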

We review error tracking data monthly with each CPA firm client. The monthly quality report includes total errors by severity, error rate per engagement (errors divided by total engagements completed), first-pass acceptance rate (percentage of deliverables approved with no or minor revisions at CPA review), error type distribution, and trend lines comparing the current month to the prior three months.
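The two ratios in the report follow directly from the definitions above. This Python sketch shows the arithmetic, along with one simple way to express the trend as the current month minus the average of the prior three months. The function names and the sample counts are hypothetical.

```python
def error_rate(total_errors, engagements_completed):
    """Errors divided by total engagements completed."""
    if engagements_completed == 0:
        return 0.0
    return total_errors / engagements_completed


def first_pass_acceptance_rate(accepted_first_pass, deliverables_reviewed):
    """Share of deliverables approved with no or minor revisions at CPA review."""
    if deliverables_reviewed == 0:
        return 0.0
    return accepted_first_pass / deliverables_reviewed


def trend_vs_prior(current, prior_three):
    """Current month minus the average of the prior three months."""
    return current - sum(prior_three) / len(prior_three)


# Hypothetical month: 7 errors across 50 engagements, 34 of 40 deliverables accepted.
current_rate = error_rate(7, 50)
acceptance = first_pass_acceptance_rate(34, 40)
change = trend_vs_prior(current_rate, [0.08, 0.09, 0.07])

print(f"Error rate: {current_rate:.0%}")
print(f"First-pass acceptance: {acceptance:.0%}")
print(f"Change vs prior three months: {change:+.0%}")
```

A positive change means the error rate is rising relative to the recent average, which is the cue to look at the error type distribution for the cause.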

This report is the single most important QC tool in the system. It replaces gut feelings ("I think the quality has been slipping") with data ("The error rate on partnership returns increased from 8 percent to 14 percent this month, driven by three state apportionment errors"). Our month-end close checklist shows how this kind of tracking works at the task level.

The error tracking data feeds into a KPI dashboard that gives both the CPA firm and our team a real-time view of quality. Here are the KPIs that matter most.

First-pass acceptance rate is the percentage of deliverables that pass CPA review with no revisions or only minor cosmetic revisions. Target: 85 percent or higher for mature engagements. New engagements typically start at 65 to 75 percent and improve over the first 3 to 6 months.

Critical error rate tracks errors that would result in incorrect financial statements or tax filings if not caught. Target: below 2 percent. If this number exceeds 5 percent for more than one month, it triggers a formal root cause analysis.
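The escalation rule can be written down as a small check. The Python sketch below reads "more than one month" as the two most recent months both exceeding 5 percent; that reading is an assumption, as are the function and constant names. The monthly rates are taken as already computed.

```python
TARGET = 0.02   # critical error rate target: below 2 percent
TRIGGER = 0.05  # above 5 percent for more than one month triggers root cause analysis


def critical_error_status(monthly_rates):
    """Classify the critical error rate from a chronological list of monthly rates."""
    if not monthly_rates:
        return "no data"
    recent = monthly_rates[-2:]
    # Assumption: "more than one month" means the last two months both exceed the trigger.
    if len(recent) == 2 and all(rate > TRIGGER for rate in recent):
        return "root cause analysis"
    if monthly_rates[-1] < TARGET:
        return "on target"
    return "above target"


print(critical_error_status([0.01, 0.015]))  # latest month is under the 2 percent target
print(critical_error_status([0.01, 0.06]))   # one high month only
print(critical_error_status([0.06, 0.07]))   # two high months in a row
```

A single bad month is reported as above target but does not escalate, which keeps one unusual engagement from triggering a formal review.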

Turnaround time measures the elapsed time from assignment to delivery. This is a quality indicator because rushed work produces more errors. If turnaround times are consistently at the boundary of the SLA, the team may be overloaded.

Revision cycle time measures how long it takes to turn around revisions after CPA review. Target: same business day for minor revisions, 24 hours for significant revisions.

Client inquiry rate tracks how often clients identify issues after delivery. Target: fewer than 1 inquiry per engagement per month that results in a correction.

These KPIs are reviewed weekly during the first three months of an engagement and monthly thereafter. The dashboard itself can be as simple as a shared spreadsheet or as sophisticated as a purpose-built portal. The data matters more than the presentation.

A QC system without a feedback loop is just measurement. The feedback loop is what turns measurement into improvement.

Here is how the loop works at Madras. CPA review notes are returned to the preparer within 24 hours. The preparer reviews the notes and makes corrections. The preparer also reviews the error tracking log weekly to identify their personal error patterns. Monthly, the team lead reviews aggregate error data with each preparer, identifying trends and assigning targeted training where needed. Quarterly, we review the QC process itself with the CPA firm. Are the checklists still relevant? Do review criteria need updating? Are there new work types that need their own checklists?

This feedback loop is what separates firms that have a good first month and then plateau from firms that keep improving. Our guide to managing offshore accounting teams covers the management practices that keep this loop running.

The QC system described above can run on spreadsheets and email. Many firms start that way and it works fine at smaller scale. But as the engagement grows, purpose-built tools make the system more efficient.

Project management platforms (Karbon, Canopy, Jetpack Workflow, Financial Cents) track assignments, deadlines, and status. They replace email chains and shared spreadsheets for workflow management.

Document management (SharePoint, SmartVault, Citrix ShareFile) provides version-controlled storage for workpapers, checklists, and deliverables. Version control matters because you need to know which version the reviewer approved.

Communication tools (Teams, Slack) with dedicated channels per engagement keep questions and answers organized and searchable. Random email threads are the enemy of consistent quality.

Checklist tools can be embedded in the project management platform or run separately. The key requirement is that completed checklists are saved with the deliverable, not discarded after completion.

We integrate with whatever technology stack the CPA firm already uses. The QC system adapts to the tools, not the other way around. Our guide to choosing an outsourcing partner covers technology integration as one of the key evaluation criteria.

Making checklists too long is the most common mistake. A 60-item checklist for a simple bookkeeping close does not get completed honestly. Preparers start checking items without actually verifying them. Keep checklists focused on the 20 to 30 items that matter most and that correspond to the most common error types.

Skipping the peer review layer to save time usually costs more time in the end. Catching an error at the peer review level saves 3 to 5 times as much CPA review time as the peer reviewer spends finding it. The math almost always favors adding peer review for complex work.

Not calibrating the review to the preparer's experience level wastes senior reviewer time. A preparer in their first month on a new engagement type needs a detailed review. A preparer who has handled the same engagement for two years needs a targeted review focused on changes and judgment areas. The review depth should adapt.

Treating QC as static means the system degrades over time. Clients change, regulations change, staff changes. The QC process needs regular updates. Quarterly reviews of checklists, review criteria, and KPI targets keep the system relevant.

If you already outsource accounting work and your QC system is informal ("the partner reviews everything"), you are probably spending too much partner time on things that a structured system would catch earlier. If you are considering outsourcing and worried about quality, a well-designed QC system is the answer.

At madrasaccountancy.com, quality control is built into every engagement from day one. We do not wait for problems to build a QC process. We start with the checklists, peer review, error tracking, and KPI dashboard already in place. If your firm wants to see what this looks like for your specific work types, reach out and we will walk you through it.

The initial setup takes 2 to 4 weeks. This includes building the preparation checklists for each work type, configuring the error tracking system, establishing review protocols, and setting up the KPI dashboard. The system continues to be refined over the first 3 to 6 months as real data comes in and checklists get calibrated to the specific engagement.

For new engagements, expect a first-pass acceptance rate of 65 to 75 percent in the first month, improving to 80 to 85 percent by month three, and reaching 85 to 90 percent by month six. Engagements that consistently stay below 80 percent after six months have a structural issue (unclear instructions, scope creep, preparer mismatch) that needs to be addressed directly.

Peer review adds 15 to 45 minutes per deliverable depending on complexity. We build this into our turnaround time commitments, so the SLA accounts for the peer review step. The net effect on the CPA firm is usually positive because the CPA reviewer spends less time on the deliverable when peer review has already cleaned up common issues.

Our error tracking identifies preparer-specific patterns. When a preparer shows a recurring error type, we assign targeted training on that specific area. If the pattern persists after training, we reassign the preparer to a different work type that better matches their strengths or replace them on the engagement. The CPA firm is kept informed throughout this process.

QC intensity can vary by client, and we recommend that it does. A large client with complex multi-entity accounting needs more rigorous QC than a small single-entity bookkeeping client. We tier QC intensity based on engagement complexity, client sensitivity, and historical error rates. High-risk engagements get mandatory peer review and detailed CPA review. Lower-risk engagements may skip peer review and use a streamlined CPA review.
